Robotic Vehicles (RVs), including autonomous aerial drones and ground rovers, are increasingly used in industrial, warehouse and even space settings. Unfortunately, these vehicles are exposed to both physical and cyber attacks, even when they are protected by path-monitoring systems.

Dash et al. developed and carried out three types of stealthy attacks on RVs, assessing the effort each required, its impact on the target RVs and its efficiency. The attackers were assumed to be unaware of the specifics of the RV and unable to delete system logs, tamper with the device's firmware or gain administrator (root) access. They could, however, compromise the RVs, snoop on control inputs and outputs, and replace the libraries used by the RV software.

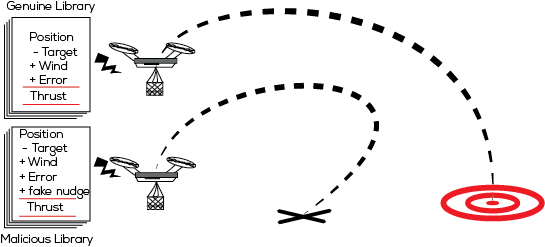

RVs generally draw on two common code libraries for their control: sensor functions and inertial measurement functions. Replacing these libraries with versions containing malicious code lets an attacker make subtle changes to how the RV interprets its sensor input. The researchers identified three methods for attacking an RV: False Data Injection, Artificial Delay and Switch Mode attacks. False Data Injection alters the measurements from the robot's sensors so that they present a false picture of the RV's actual position. The Artificial Delay attack performs resource-intensive operations that slow time-critical functions and alter the timing behavior of the system. Switch Mode attacks perform a false data injection while the RV is switching between operation modes, such as from general flight mode to landing mode.
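To make the three mechanisms more concrete, here is a minimal Python sketch of what a replaced sensor library might do. It is not the researchers' code: the `CompromisedSensorLib` class, its parameters and the `(x, y)` position format are hypothetical, and `time.sleep` merely stands in for the resource-intensive work a real Artificial Delay attack would perform. The wrapper forwards each call to the genuine reader, then adds a small, slowly growing and capped bias (False Data Injection), can optionally stall the caller (Artificial Delay), and can restrict injection to a short window after a mode change (Switch Mode).

```python
import time
from typing import Callable, Tuple

Position = Tuple[float, float]  # hypothetical (x, y) position estimate in meters


class CompromisedSensorLib:
    """Hypothetical stand-in for a maliciously replaced sensor library.

    Every read is forwarded to the genuine reader. The result may then be
    biased (false data injection), delayed (artificial delay), or biased only
    shortly after a flight-mode change (switch mode).
    """

    def __init__(self, genuine_read: Callable[[], Position],
                 bias_per_step: float = 0.05, max_bias: float = 1.0,
                 delay_s: float = 0.0, switch_mode_only: bool = False,
                 switch_window: int = 10) -> None:
        self.genuine_read = genuine_read
        self.bias_per_step = bias_per_step  # extra offset added on each injected read (m)
        self.max_bias = max_bias            # cap that keeps the error small
        self.delay_s = delay_s              # seconds to stall each read
        self.switch_mode_only = switch_mode_only
        self.switch_window = switch_window  # number of injected reads after a mode change
        self.bias = 0.0
        self.mode = "FLY"
        self.window_left = 0

    def set_mode(self, new_mode: str) -> None:
        # Switch mode: open a short injection window whenever the mode changes.
        if new_mode != self.mode:
            self.window_left = self.switch_window
        self.mode = new_mode

    def read_position(self) -> Position:
        x, y = self.genuine_read()
        if self.delay_s > 0:
            # Artificial delay: sleeping stands in for resource-intensive work.
            time.sleep(self.delay_s)
        if self.switch_mode_only:
            if self.window_left == 0:
                return (x, y)  # outside the switching window: report honestly
            self.window_left -= 1
        # False data injection: grow the bias gradually, never past max_bias.
        self.bias = min(self.bias + self.bias_per_step, self.max_bias)
        return (x + self.bias, y)


if __name__ == "__main__":
    lib = CompromisedSensorLib(lambda: (10.0, 0.0), bias_per_step=0.25, max_bias=1.0)
    print([lib.read_position()[0] for _ in range(6)])  # prints [10.25, 10.5, 10.75, 11.0, 11.0, 11.0]
```

In this toy run the reported x coordinate drifts away from the true value of 10.0 in small steps and then stays bounded. The idea is that a slowly growing, capped error is easier to keep below a monitor's alarm threshold than a sudden jump.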

These attacks were stealthy and effective, allowing an attacker to push a drone off course by 8 to 15 meters over a distance of 35 to 50 meters and to increase flight time by 30% to 50%. The Switch Mode attacks appeared particularly effective, preventing the drone from landing or causing it to crash. The results suggest that the preparation stage for such attacks could be automated, enabling the development of self-learning malware.
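As an illustration of what automating the preparation stage could involve, the sketch below searches for the largest constant sensor bias that a simple monitor would not flag. It assumes, hypothetically, that the attacker has estimated the monitor's window length and threshold, plus a sample of normal residual noise, by snooping on the RV's control inputs and outputs. The functions `evades` and `max_stealthy_bias` and the sliding-window mean detector are illustrative only, not the procedure used by Dash et al.

```python
from typing import Sequence


def evades(bias: float, noise: Sequence[float], window: int, threshold: float) -> bool:
    """True if adding `bias` to every residual never pushes a window mean over threshold.

    Models a hypothetical monitor that alarms when the mean residual
    (observed minus predicted) over any `window` consecutive samples
    exceeds `threshold` in magnitude.
    """
    residuals = [bias + n for n in noise]
    for start in range(len(residuals) - window + 1):
        if abs(sum(residuals[start:start + window]) / window) > threshold:
            return False
    return True


def max_stealthy_bias(noise: Sequence[float], window: int, threshold: float,
                      upper: float = 10.0, iters: int = 40) -> float:
    """Bisection search for the largest non-negative bias that still evades.

    For this detector the set of evading biases is an interval, so once zero
    bias evades, evasion is monotonic on [0, upper] and bisection applies.
    """
    lo, hi = 0.0, upper
    if not evades(lo, noise, window, threshold):
        raise ValueError("monitor alarms even without an attack")
    if evades(hi, noise, window, threshold):
        return hi  # the whole search range is stealthy
    for _ in range(iters):
        mid = (lo + hi) / 2
        if evades(mid, noise, window, threshold):
            lo = mid  # still stealthy: try a larger bias
        else:
            hi = mid  # detected: back off
    return lo


if __name__ == "__main__":
    # Toy, deterministic noise: residuals alternate between +0.5 and -0.5.
    noise = [0.5 if i % 2 == 0 else -0.5 for i in range(40)]
    print(round(max_stealthy_bias(noise, window=2, threshold=0.5), 3))  # prints 0.5
```

Bisection is used here only because it needs nothing more than a yes/no "was it detected?" signal, which is the kind of feedback an attacker observing the vehicle could plausibly obtain.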

The research shows that stealthy attacks against RVs can remain effective while evading common detection methods. More sophisticated detection, such as monitoring with variable detection windows, could improve the security of RVs.
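One way to read "variable detection windows" is a monitor that checks the same residuals over several window lengths rather than one fixed window. The sketch below is a hypothetical illustration of that idea, not the mitigation specified in the paper. The names `window_alarm` and `multi_window_alarm` are illustrative, and tightening the threshold to `base_threshold * sqrt(base_window / w)` for a window of `w` samples is an assumption: zero-mean noise averages out roughly as `1/sqrt(w)`, while a persistent attack bias does not.

```python
import math
from typing import Iterable, Sequence


def window_alarm(residuals: Sequence[float], window: int, threshold: float) -> bool:
    """Alarm if the mean residual over any `window` consecutive samples exceeds threshold."""
    for start in range(len(residuals) - window + 1):
        if abs(sum(residuals[start:start + window]) / window) > threshold:
            return True
    return False


def multi_window_alarm(residuals: Sequence[float], base_window: int,
                       base_threshold: float, windows: Iterable[int]) -> bool:
    """Check several window lengths, tightening the threshold for longer ones.

    Assumed scaling: averaging w samples of zero-mean noise shrinks it by
    roughly sqrt(w), so the threshold for window w is
    base_threshold * sqrt(base_window / w). A persistent bias does not
    shrink, so it can cross the tighter threshold of a longer window.
    """
    for w in windows:
        tau = base_threshold * math.sqrt(base_window / w)
        if window_alarm(residuals, w, tau):
            return True
    return False


if __name__ == "__main__":
    # Toy, deterministic noise plus a stealthy 0.4 bias, just under the 0.5 threshold.
    noise = [0.5 if i % 2 == 0 else -0.5 for i in range(40)]
    attacked = [0.4 + n for n in noise]
    print(window_alarm(attacked, 2, 0.5))                 # False: fixed short window misses it
    print(multi_window_alarm(attacked, 2, 0.5, [2, 8]))  # True: the 8-sample window catches it
    print(multi_window_alarm(noise, 2, 0.5, [2, 8]))     # False: no alarm without an attack
```

A real monitor would derive its thresholds from measured noise statistics rather than from this fixed scaling. The toy run only shows that a bias tuned to stay just under one window's threshold can stand out when the detector also looks over a longer window.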

Robotic Vehicles could be attacked effectively and undetectably by inserting malicious code into their software libraries.